Not everyone wants to pay for an AI subscription, and with prices rising, it may not be a good idea in the first place. If you bought an annual Perplexity subscription, you were lied to, and paying $20 a month for any AI subscription adds up quickly.

With quantized models becoming more popular, there’s a good chance your existing computer can run capable AI models locally. Plenty of free tools let you run them right on your own hardware, with no subscription in sight.

Ollama

Install once, pull models like packages

If you’re comfortable with the command line, Ollama is the fastest way to get a local LLM up and running. Install it, open your terminal, and type ollama run [model name] and you’ve got a local AI running in your terminal window. For example, if you want to run the Llama 3 open-source model from Meta, use this command:

ollama run llama3

Ollama was designed with APIs in mind. It spins up a local REST API on your machine that’s compatible with OpenAI’s format, so any app or script you’ve built for ChatGPT can be pointed at your local model with minimal code changes. That makes it a piece of infrastructure rather than just a chatbot, which is one of the reasons it’s popular among developers.
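As a rough illustration of what “OpenAI-compatible” means in practice, here is a minimal Python sketch that sends one chat message to a local Ollama server. It assumes Ollama is already running on its default port (11434) and that the llama3 model has been pulled; the helper names build_chat_request and chat are made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port, 11434.
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(prompt, model="llama3", base_url=OLLAMA_BASE_URL):
    """Build an OpenAI-style chat completion request for a local server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, model="llama3", base_url=OLLAMA_BASE_URL):
    """Send the prompt to the local server and return the reply text."""
    request = build_chat_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the server running, calling chat("Explain quantization in one sentence.") should return the model’s answer as a string. Nothing here is specific to Ollama beyond the base URL, which is the point of the compatible format.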

The app supports over 30 optimized models out of the box, including Llama 3, DeepSeek, Mistral, and Phi-3. It runs on Windows, macOS, and Linux, and adds little memory overhead of its own compared to other tools. The only trade-off is that it doesn’t come with a graphical interface, so if the terminal makes you nervous, you’re going to have to try other options.

- OS: Windows, macOS, Linux
- Developer: Ollama
- Price model: Free, open-source

A lightweight local runtime that lets you download and run large language models on your own machine with a single command.

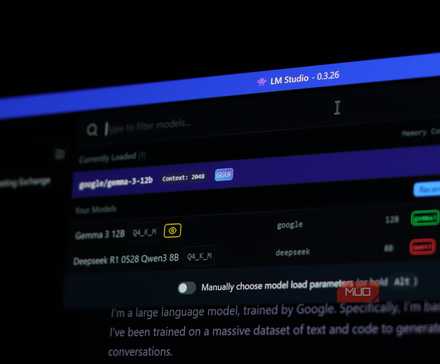

LM Studio

Download and run AI models like apps on your phone

If you prefer working with a GUI, LM Studio is one of the easiest apps to use. It brings a desktop interface where you can browse Hugging Face directly, download quantized models, and tweak system prompts without ever needing a configuration file.

It’s also got features like a headless daemon for server deployments, parallel inference requests with continuous batching, and a new stateful REST API with local MCP server support. For daily use, though, the star feature is still the model discovery. You can search, compare, and download models sorted by size, performance, and compatibility without ever leaving the app’s interface.

LM Studio also includes a local server that exposes an OpenAI-compatible API on your machine, so it works as a backend for other tools too. It supports both Nvidia and Apple silicon GPUs, and includes built-in benchmarking so you can compare how different models perform on your specific hardware. The downside is that it’s an Electron-based app, so the interface itself uses extra RAM on top of what the model needs.
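To give a sense of how another tool might use LM Studio as a backend, the sketch below asks the local server which models it can serve. It assumes the server has been started and is listening on port 1234 (believed to be the default; check the app’s server settings), and that it answers in the usual OpenAI model-list shape. The function names are hypothetical.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is listening on port 1234.
LM_STUDIO_BASE_URL = "http://localhost:1234/v1"


def parse_model_ids(listing):
    """Extract model identifiers from an OpenAI-style model list response."""
    return [entry["id"] for entry in listing.get("data", [])]


def list_local_models(base_url=LM_STUDIO_BASE_URL):
    """Ask the local server which models it can serve."""
    with urllib.request.urlopen(f"{base_url}/models", timeout=10) as response:
        return parse_model_ids(json.load(response))
```

A client would typically call list_local_models() once at startup and pass one of the returned identifiers as the model field in later chat requests.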

- OS: Windows, macOS, Linux
- Developer: Element Labs
- Price model: Free

A free desktop app that lets you download, run, and chat with large language models locally, no cloud required.

GPT4All

The easiest entry point into offline LLMs

GPT4All is another easy option for quickly getting local AI running on your PC. If you’ve never run a local AI model before and the idea sounds intimidating to you (comparing multiple models on Hugging Face can do that), this is where to start. Download the app, open it, pick a model from the built-in list, and start chatting right away.

The standout feature here is LocalDocs, a built-in RAG (Retrieval-Augmented Generation) system. Point it at a folder of PDFs, text files, or Markdown documents, and it automatically indexes everything. When you ask a question, the model pulls in relevant passages from your files instead of relying solely on its training data.
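The retrieve-then-ask idea behind a RAG system can be sketched in a few lines of Python. This is a toy illustration, not how LocalDocs is implemented: it ranks passages by simple word overlap with the question, where real systems typically compare embedding vectors. All names are made up for the example.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, passages, top_k=2):
    """Return up to top_k passages that share the most words with the question."""
    question_words = tokenize(question)
    scored = [
        (len(question_words & tokenize(passage)), index, passage)
        for index, passage in enumerate(passages)
    ]
    # Highest overlap first; ties keep the original passage order.
    ranked = sorted(scored, key=lambda item: (-item[0], item[1]))
    return [passage for score, _, passage in ranked[:top_k] if score > 0]


def build_prompt(question, passages, top_k=2):
    """Prepend the retrieved passages to the question before it goes to the model."""
    context = "\n".join(f"- {p}" for p in retrieve(question, passages, top_k))
    return f"Answer using only these passages:\n{context}\n\nQuestion: {question}"
```

The string returned by build_prompt is what would be sent to the model, so the answer is grounded in your files rather than only in the model’s training data.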

GPT4All also runs quite well on CPU alone, which makes it ideal for laptops or older machines that don’t have the powerful hardware larger AI models require. It’s available on Windows, macOS, and Linux. However, what you gain in ease of use, you give up in flexibility: it lacks the granular control over context windows and quantization settings that Ollama and LM Studio offer.

- OS: Windows, macOS, Linux
- Developer: Nomic AI
- Price model: Free, open-source

A free, open-source local AI platform that runs large language models on your own PC without cloud dependency.

Jan

Jan brings the ChatGPT experience to your desktop

Jan takes a different approach from the other tools: rather than just being an LLM runner, it aims to be a complete offline assistant platform with a clean, ChatGPT-like interface. It’s fully open-source and designed from the ground up for privacy, so you get what feels like a finished product while your data stays on your own machine.

The setup is also dead simple: download Jan, pick a model that fits your hardware (the app helps you choose if you can’t decide), and start chatting. It also integrates directly with Hugging Face, so you can browse and download models like Qwen, Llama, and Mistral right from the UI. Just like Ollama and LM Studio, Jan sets up a local API server on port 1337 that mimics OpenAI’s API, letting you connect it to VS Code as a local coding assistant, to custom scripts, or to anything else that works over HTTP.
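Because all three servers mimic the same API, switching a script from one tool to another can come down to changing the base URL. The sketch below shows that idea. Jan’s port is the one mentioned above; the Ollama and LM Studio ports are assumed defaults, and the /v1 path prefix and function name are assumptions for illustration. Some versions may also expect an API key header, which this sketch leaves out.

```python
# Jan's port comes from the article; the other two are assumed defaults.
LOCAL_SERVERS = {
    "jan": "http://localhost:1337/v1",
    "ollama": "http://localhost:11434/v1",
    "lm-studio": "http://localhost:1234/v1",
}


def chat_completions_url(tool):
    """Return the OpenAI-style chat endpoint for the named local tool."""
    try:
        base_url = LOCAL_SERVERS[tool.lower()]
    except KeyError:
        raise ValueError(f"unknown tool: {tool!r}") from None
    return f"{base_url}/chat/completions"
```

An editor extension or script that accepts a custom OpenAI base URL would be given the matching entry from this table instead of the cloud endpoint.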

The program works offline once the model has been downloaded. It also supports Windows, macOS, and Linux, and runs on a universal engine called Cortex. If you want the closest experience to ChatGPT without actually using it, Jan is a pretty solid choice.

- OS: Windows, macOS, Linux
- Developer: Jan
- Price model: Free, open-source

A free, open-source AI chat assistant that runs local large language models on your PC, no cloud required.

Running local AI is easier than ever

Quantized models are making it possible to run big AI models on modest hardware, and there are plenty of apps that let you enjoy AI locally on your PC. That said, you’ll still need to meet some hardware requirements to get these models running properly.

Generally speaking, 8 GB of RAM and a modern CPU will get you going. For larger models and a better experience, aim for 16 GB of RAM, a dedicated GPU with at least 8 GB of VRAM, and an SSD for storing models and data.
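A back-of-the-envelope calculation shows why quantization matters for these numbers. The weights of a model take roughly (parameter count × bits per weight ÷ 8) bytes, so the sketch below estimates that size and checks it against available memory. The 1.2 overhead factor is a made-up allowance for context and runtime buffers, not a measured figure, and the function names are hypothetical.

```python
def weights_size_gb(params_billions, bits_per_weight):
    """Approximate size of the model weights alone, in gigabytes (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_memory(params_billions, bits_per_weight, available_gb, overhead_factor=1.2):
    """Rough check: do the weights plus an assumed overhead fit in available_gb?"""
    needed_gb = weights_size_gb(params_billions, bits_per_weight) * overhead_factor
    return needed_gb <= available_gb


print(weights_size_gb(7, 16))   # 7B model at 16 bits per weight -> 14.0
print(weights_size_gb(7, 4))    # the same model quantized to 4 bits -> 3.5
print(fits_in_memory(7, 4, 8))  # True under these assumptions
```

By this estimate, a 7-billion-parameter model drops from about 14 GB to about 3.5 GB when quantized from 16 bits to 4 bits per weight, which is what brings it within reach of an 8 GB machine.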

Paying for AI subscriptions is now a choice, not a necessity. Whether you’re a developer who wants API control, a privacy-conscious user who doesn’t want data leaving your machine, or someone who just wants to experiment without paying for a subscription, there’s an app that’ll let you use local AI in a manner that fits your needs.