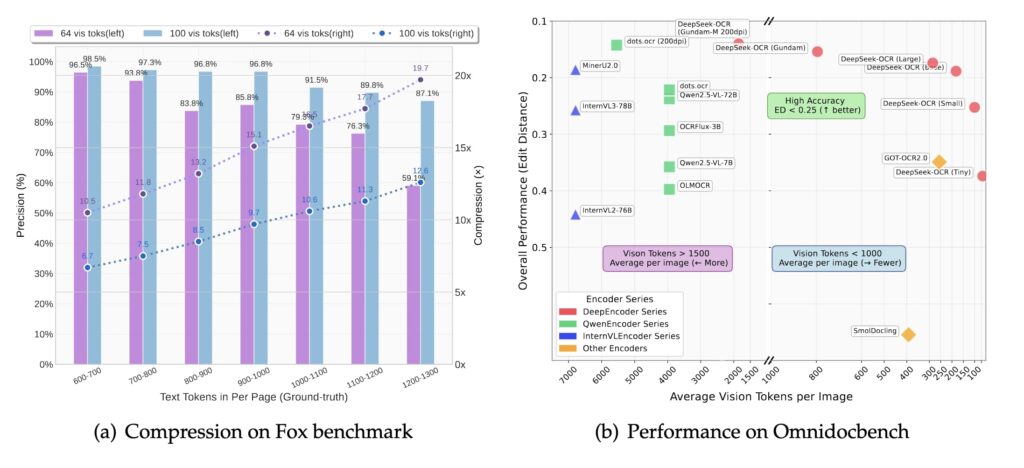

DeepSeek has announced an OCR (Optical Character Recognition) model that renders text-heavy data as images and represents it with far fewer vision tokens than the equivalent text tokens. At compression ratios below 10x it decodes the text with about 97% precision, and at 20x it still reaches about 60%. On OmniDocBench it matches or beats competing OCR models while using fewer vision tokens per page.

From the arXiv abstract of "DeepSeek-OCR: Contexts Optical Compression":

We present DeepSeek-OCR as an initial investigation into the feasibility of compressing long contexts via optical 2D mapping. DeepSeek-OCR consists of two components: DeepEncoder and DeepSeek3B-MoE-A570M as the decoder. Specifically, DeepEncoder serves as the core engine, designed to maintain low activations under high-resolution input while achieving high compression ratios to ensure an optimal and manageable number of vision tokens. Experiments show that when the number of text tokens is within 10 times that of vision tokens (i.e., a compression ratio < 10x), the model can achieve decoding (OCR) precision of 97%. Even at a compression ratio of 20x, the OCR accuracy still remains at about 60%. This shows considerable promise for research areas such as historical long-context compression and memory forgetting mechanisms in LLMs. Beyond this, DeepSeek-OCR also demonstrates high practical value. On OmniDocBench, it surpasses GOT-OCR2.0 (256 tokens/page) using only 100 vision tokens, and outperforms MinerU2.0 (6000+ tokens per page on average) while utilizing fewer than 800 vision tokens. In production, DeepSeek-OCR can generate training data for LLMs/VLMs at a scale of 200k+ pages per day (a single A100-40G). Codes and model weights are publicly accessible at this http URL.
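The compression ratio in the abstract is the number of text tokens divided by the number of vision tokens used to represent them. A minimal sketch of that arithmetic follows. The function names and the 1,000-token page are hypothetical and chosen for illustration. Only the 256, 100, 6000 and 800 token figures come from the abstract.

```python
import math


def compression_ratio(text_tokens: int, vision_tokens: int) -> float:
    """Text tokens represented per vision token (the abstract's ratio)."""
    if text_tokens < 0 or vision_tokens <= 0:
        raise ValueError("text_tokens must be >= 0 and vision_tokens > 0")
    return text_tokens / vision_tokens


def vision_budget(text_tokens: int, target_ratio: float) -> int:
    """Smallest vision-token count that keeps the ratio at or below target_ratio."""
    if target_ratio <= 0:
        raise ValueError("target_ratio must be positive")
    return math.ceil(text_tokens / target_ratio)


# Hypothetical page containing 1,000 text tokens.
page_text_tokens = 1000
print(compression_ratio(page_text_tokens, 100))  # 10.0
print(vision_budget(page_text_tokens, 10))       # 100 vision tokens
print(vision_budget(page_text_tokens, 20))       # 50 vision tokens

# Per-page token counts quoted in the abstract for OmniDocBench.
print(256 / 100)   # GOT-OCR2.0 uses 2.56x the tokens of DeepSeek-OCR
print(6000 / 800)  # MinerU2.0 uses at least 7.5x the tokens
```

The MinerU2.0 figure is a lower bound, since the abstract reports "6000+" tokens for MinerU2.0 and "fewer than 800" for DeepSeek-OCR.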

Optical compression could ease a key LLM bottleneck in long-context tasks such as working with large codebases. Packing more information into a shorter context window helps avoid the performance drops that come with very long inputs.

It could also make model training faster and cheaper, which is crucial for China amid GPU shortages.

Images can convey dense information (text, emotions, visuals) compactly. In production, the model can generate 200k+ pages of training data per day for LLMs/VLMs on a single A100-40G.
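To put the 200k+ pages per day figure in perspective, here is a rough back-of-the-envelope sketch. The helper `days_needed` and the 10-million-page corpus are hypothetical, and the linear scaling across GPUs is an assumption, not something the paper states.

```python
SECONDS_PER_DAY = 24 * 60 * 60
PAGES_PER_DAY = 200_000  # lower bound quoted in the abstract (single A100-40G)

pages_per_second = PAGES_PER_DAY / SECONDS_PER_DAY
seconds_per_page = SECONDS_PER_DAY / PAGES_PER_DAY
print(f"{pages_per_second:.2f} pages/s")  # 2.31 pages/s
print(f"{seconds_per_page:.3f} s/page")   # 0.432 s/page


def days_needed(total_pages: int, gpus: int = 1) -> float:
    """Days to process total_pages, ASSUMING throughput scales linearly with GPUs."""
    if gpus <= 0:
        raise ValueError("gpus must be positive")
    return total_pages / (PAGES_PER_DAY * gpus)


# Hypothetical corpus of 10 million pages.
print(days_needed(10_000_000))           # 50.0 days on one GPU
print(days_needed(10_000_000, gpus=10))  # 5.0 days on ten GPUs
```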

Beyond plain text, it parses charts (for example, turning financial reports into structured data), chemical formulas (into SMILES format), geometric figures, and natural images. It also retains general visual and language skills such as image description and object detection.

Andrej Karpathy says the DeepSeek paper describes a good OCR model, but he is more intrigued by the question of pixels versus text tokens. Pixels may be better inputs for LLMs than text: rendering text as images could allow richer, more efficient processing.

He suggests ditching tokenizers. All inputs, even pure text, could be fed to the model as images for holistic processing.

Elon Musk's view is that 99%+ of future AI input and output will be photons (light-based), since reality fundamentally runs on them.

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting edge technologies, he is currently a Co-Founder of a startup and fundraiser for high potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.