Pinecone, arguably the company most synonymous with the fast-growing vector database industry powering many generative AI large language model (LLM) data deployments in the enterprise, has announced a leadership change that opens the next stage for the company.

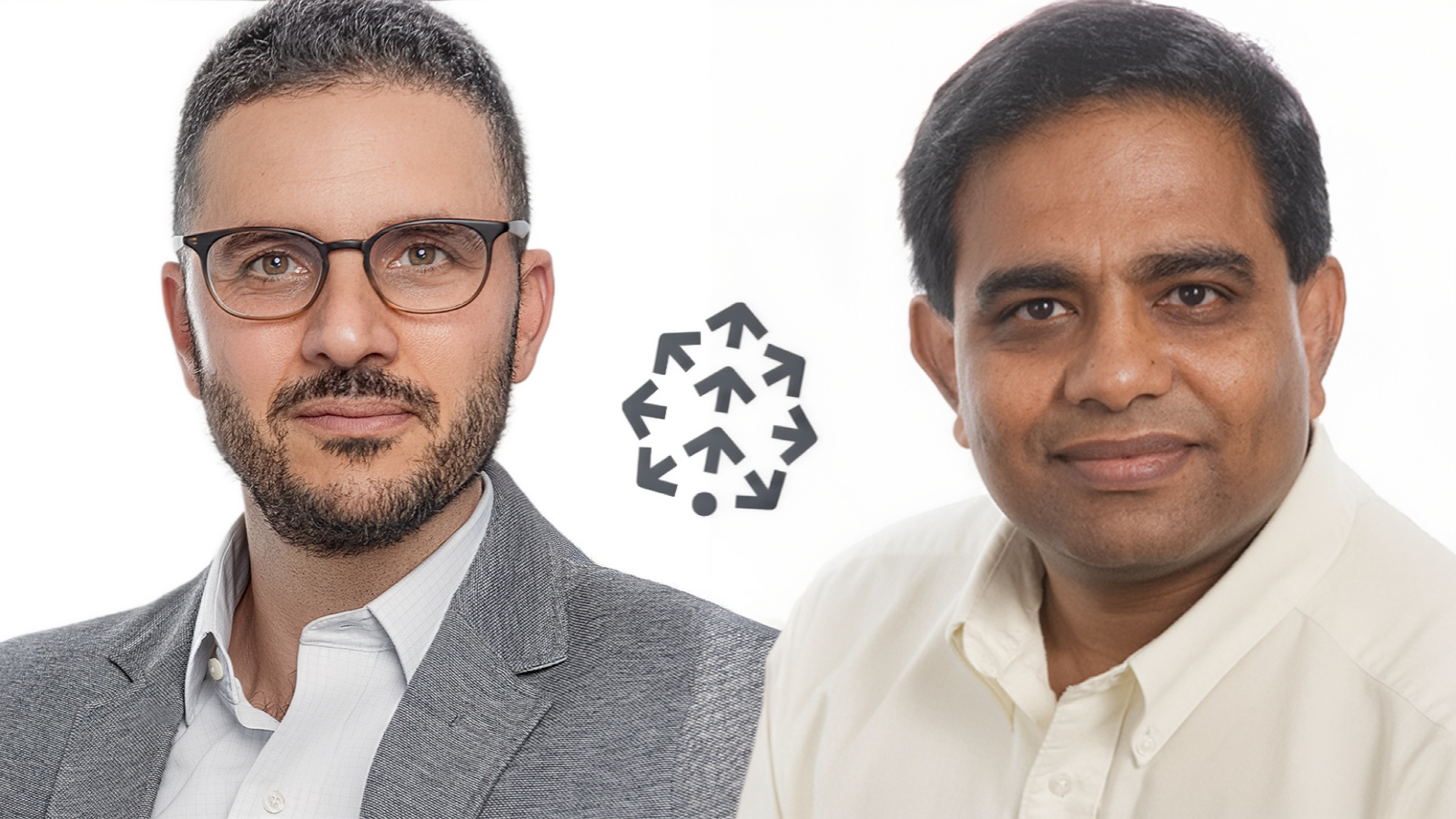

On Monday, the New York City-based startup declared that its founder and longtime CEO, Edo Liberty, will move into a new role as Chief Scientist. Taking his place at the helm as CEO is Ash Ashutosh, a former Google global sales director and veteran entrepreneur with four decades of experience in data infrastructure.

In a bid to showcase its vector database technology and its framework for building AI chatbots and agents on top of it, Pinecone is not issuing today's news as a conventional static press release. Instead, it is directing interested readers to a custom chatbot experience where they can ask questions of a Pinecone AI press release assistant built on a vector database, Pinecone frameworks, and OpenAI's GPT-4.1 LLM.

The move may signal Pinecone’s intent to double down on its core technical vision while also professionalizing its commercial operations.

But it also raises new questions about how the company will navigate heightened competition, intensifying scrutiny of vector search technology, and the prospect of a major change in ownership.

Last week, VentureBeat conducted an exclusive co-interview with Liberty and Ashutosh to discuss the latest chapter in Pinecone's story of growth and adaptation in the increasingly competitive vector database market and the broader machine learning-driven AI marketplace.

A New CEO Steps Up

According to the announcement, Ashutosh will lead Pinecone’s “growth phase” while Liberty refocuses on research and innovation as Chief Scientist.

For Liberty, who has served as both public face and technical visionary since founding Pinecone in 2019, the handoff marks a significant shift. “It is critical for Pinecone that we keep pushing the boundaries of context-aware AI and search, and that’s where I’ll be focusing my energy,” he said in a statement sent to VentureBeat.

"We’re doubling or tripling down on our mission to make AI knowledgeable—investing deeply in research while accelerating the core database business," Liberty told me in our interview last week. "I’m going to personally lead the research, and we’ve been looking for an amazing leader to spearhead growth of the vector database. We’re delighted that Ash is joining us."

A longtime entrepreneur and technologist with deep experience in enterprise data and cloud infrastructure, Ashutosh brings a resume heavy with enterprise credibility.

He founded Actifio in 2009, building it into a leader in copy data management before its 2020 acquisition by Google, where he went on to lead global solution sales for cloud data products. Earlier in his career he co-founded AppIQ, which Hewlett-Packard acquired in 2005, and later served as chief technologist for HP’s StorageWorks division. He has also worked as a partner at Greylock Partners and continues to advise startups as a founding pillar of Pillar VC in Boston.

Ashutosh holds a master’s degree in computer science from Penn State University and a B.Tech in electronics and instrumentation engineering from the Kakatiya Institute of Technology & Science.

In his first comments as Pinecone CEO, Ashutosh praised Liberty’s technical leadership and framed Pinecone’s mission as one of execution.

“Vectors are the new language of AI, and Pinecone is the clear leader building the core infrastructure that bridges the human world and the computational world,” Ashutosh said in our interview. “The AI hype cycle is ending and the business cycle is starting: people are moving from what’s possible to what’s practical.”

There is a precedent in Silicon Valley for this kind of founder-to-operator transition. Google’s co-founders Larry Page and Sergey Brin stepped aside in 2001 at the urging of investors like John Doerr, bringing in Eric Schmidt as CEO to provide what was often described as “adult supervision.”

Schmidt steered Google through a decade of explosive growth, overseeing its IPO, the launches of Gmail and Android, the acquisition of YouTube, and the build-out of its lucrative ad infrastructure, before handing the reins back to Page in 2011.

Google’s trajectory shows how such changes can work out: founders move into technical or visionary roles while experienced operators run the business.

But that transition also produced moments of anxiety about Google’s direction, and Pinecone’s customers may be asking a similar question now: does this change mark a new era of stability, or does it signal uncertainty about the company’s future?

Pinecone’s Strategic Crossroads

The leadership shift comes at a delicate moment. Pinecone, one of the first and best-funded startups in the vector database space (it has raised a reported $138 million across three rounds since 2021), is reportedly exploring a potential sale.

Subscription tech news outlet The Information recently reported that the company has engaged bankers to evaluate options, with speculation suggesting a valuation north of $2 billion, well above its last valuation of $750 million.

This comes after Pinecone was recognized by Fast Company earlier this year as one of the “World’s Most Innovative Companies of 2025” for enterprise tech, citing its cascading retrieval, reranking features, and serverless architecture.

These developments highlight Pinecone’s dual position: an innovator in enterprise AI search and a possible target in a wave of consolidation around retrieval-augmented generation (RAG) infrastructure.

A vector database is a type of system built to store and search through vectors, which are mathematical representations of text, images, audio, or other data. Instead of matching exact keywords, vectors let software find “similar” items by measuring distance in a high-dimensional space. For generative AI applications, especially those using RAG, this is essential: it allows LLMs to look up relevant documents, knowledge bases, or media files in real time and ground their responses in reliable, domain-specific information. In short, vector databases act like the long-term memory of an AI system, helping reduce hallucinations and improve accuracy for everything from chatbots to enterprise search tools.
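To make “measuring distance” concrete, here is a minimal sketch of brute-force nearest-neighbor search in Python. It assumes hand-made 3-dimensional toy vectors (hypothetical data standing in for real embeddings) and uses cosine similarity as the closeness measure. It does not reflect Pinecone's API or internals; production systems use learned embeddings with hundreds or thousands of dimensions and specialized indexes rather than scanning every vector.

```python
import math

# Hypothetical toy "embeddings": hand-picked 3-D vectors standing in for
# the output of a real embedding model.
DOCUMENTS = {
    "refund policy": [0.9, 0.1, 0.0],
    "shipping times": [0.2, 0.9, 0.1],
    "return a damaged item": [0.8, 0.3, 0.1],
    "company history": [0.0, 0.1, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query_vector, documents, k=2):
    """Brute-force search: score every stored vector, return the k closest names."""
    scored = [
        (cosine_similarity(query_vector, vec), name)
        for name, vec in documents.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:k]]


# A query vector that points toward the "refunds and returns" region.
query = [0.85, 0.2, 0.05]
print(top_k(query, DOCUMENTS, k=2))
```

Note that neither matching document needs to share a keyword with the user's question; closeness in the vector space is what counts. Scoring every stored vector grows linearly with the index, so at the scale of hundreds of millions of vectors, vector databases typically rely on approximate nearest-neighbor indexes that trade a little recall for much lower latency.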

The vector database market has moved quickly from its research roots in libraries like FAISS and Annoy to a mainstream data technology. As of 2025, the space is worth roughly $3 billion and growing at more than 20% annually, with forecasts ranging from $7 billion to $10 billion by the early 2030s. Dedicated vendors such as Pinecone, Weaviate, Zilliz/Milvus, Qdrant, and Vespa continue to innovate on performance and large-scale retrieval, while major platforms, including Microsoft, Google Cloud, AWS, Databricks, Snowflake, and MongoDB, have added vector search directly into their databases and AI stacks. The result is a market that has shifted from niche startups to a crowded, highly competitive field where vector search is now a standard feature of modern data infrastructure. The main challenges today are differentiation in the face of commoditization, keeping storage and latency costs under control, and proving retrieval quality in real-world generative AI deployments.

Asked whether those sale rumors signaled a shift in strategy, Liberty countered: "Ash joining and the effort we’re putting into growing the company — and bringing the future of knowledgeable AI, data, search, and agent automation to as many developers and companies as possible — should be signal enough of what we want to do."

Debating the Limits of Vector Search

At the same time, fresh research from Google DeepMind has sparked heated debate in the retrieval community.

A recent paper from the AI research lab, titled "On the Theoretical Limitations of Embedding-Based Retrieval," argues that for a given embedding dimension, there exist sets of relevant documents that no query can return together as its top results, meaning some combinations of documents in an index can never be retrieved, no matter how the model is trained.

Menlo Ventures principal Deedy Das, posting on X, called this proof that “plain old BM25 from 1994 outperforms vector search on recall,” framing it as evidence that older keyword-based search methods may still be more reliable than newer AI-driven approaches in some scenarios.

That argument resonated widely online because it taps into a familiar narrative: that flashy new AI systems may not actually surpass tried-and-true methods when pushed to their theoretical limits.

Liberty dismissed that interpretation in a reply on X and elaborated in comments to VentureBeat: “The article doesn’t say what people think. It’s a basic combinatorial result about very low-dimensional spaces: 10, maybe 50, 100. Nobody serious works in those dimensions. With reasonable dimensions, 500 and above, even random embeddings can retrieve almost any subset you want. Scientifically correct, practically irrelevant to vector search.”

The Broader Tension

The tension between the paper’s findings and Liberty’s defense illustrates the gap between theoretical limits and practical utility.

On one hand, DeepMind’s work is a reminder that, at a fixed embedding dimension, embedding-based systems can’t capture every possible relationship between queries and documents, no matter how much training data they ingest or how big the model grows.

On the other hand, Liberty’s argument underscores that most real-world applications don’t require covering every corner case.

For Pinecone, which is positioning itself as both a reliable infrastructure provider and a forward-looking innovator, this debate is central: it must convince customers and potential acquirers that its technology is not only theoretically sound but also robust enough to deliver real value at scale.

For Pinecone’s customers, today's leadership transition could signal stability or uncertainty, depending on how it is received.

The company says it supports more than 5,000 customers across industries ranging from finance and media to pharmaceuticals and AI technology.

Case studies highlighted in the announcement point to performance at scale: CustomGPT.ai, for instance, runs searches across 400 million stored vectors with latency below 20 milliseconds; sales software company Gong stores billions of vectors representing transcribed calls; and Obviant reports a 30% increase in relevance after adopting Pinecone.

These metrics suggest Pinecone has proven value in real-world enterprise applications even as theoretical debates continue.

Ashutosh struck a pragmatic note: “What you hear in the news are the bursts and the astounding claims. What you don’t hear is people quietly building real things that change how their business runs. That’s my focus.”

He also drew a sharp distinction between bundled platforms and Pinecone’s approach: “Some platforms are ‘good enough’ for a small chatbot. Pinecone has built the most scalable vector database as a foundation for serious businesses. The real monetization is with best of breed, and that’s our focus.”

Still, the transition raises questions. Why make this change now, just as Pinecone is rumored to be in acquisition talks? Does Liberty’s move into a Chief Scientist role reflect a desire to protect the company’s technical edge during a sale process? Or does it suggest that scaling Pinecone commercially requires a different type of leadership than the founder could provide?

What's Next for Pinecone?

Pinecone’s announcement comes at a time when the company faces both opportunity and pressure: an expanding customer base and recognition for innovation, but also scrutiny of its technology and speculation about its ownership.

By bringing in a seasoned operator like Ashutosh and repositioning Liberty as Chief Scientist, the company may be trying to balance credibility with investors and enterprise buyers while preserving its identity as a research-driven innovator. Whether that balance holds, or instead deepens questions about Pinecone’s trajectory, will likely become clearer in the coming months.