Eric Schmidt and other AI leaders (Andrej Karpathy, Elon Musk, and Anthropic executives) have described recursive self-improvement (RSI), in which AI autonomously designs, tests, and deploys better versions of itself, as the pivotal transition from narrow, human-guided progress to potentially explosive, closed-loop growth in intelligence. RSI is not yet fully autonomous across the entire AI stack without human oversight, but narrow versions are already running today.

Eric Schmidt’s Specific Views on RSI (Most Recent: 2025–March 2026)

Schmidt has repeatedly flagged RSI as the red line for AGI/ASI and as a safety trigger. He describes recursive self-improvement as AI learning on its own, without human instruction, and expects it within about four years (around 2029). He argues this demands limits now, warning that once recursive self-improvement begins, there will be a very serious regulatory response. As an early signal, he noted that 10–20% of the code at OpenAI and Anthropic is already AI-generated.

Schmidt describes RSI as the inflection point where scaling laws meet self-improvement, and as the path to systems with their own goals. “We don’t have it yet,” he says, but the frontier consensus puts it 2–3 years away. He ties it directly to the 92 GW energy crunch: RSI will push compute demand beyond what terrestrial grids can supply, which links recursive improvement directly to uncapping energy through space-based AI data centers.

His timeline: AGI (smarter than the smartest human in every domain) in 3–5 years via RSI, and ASI (smarter than all of humanity combined) in about 6 years.

In short, Schmidt says RSI is imminent (2027–2029), is already starting in narrow form, and is the biggest governance and safety issue. He is bullish on the benefits but insists on human control.

Other Key Voices and Concrete Research/Demos (2026)

Andrej Karpathy (March 2026) released open-source AutoResearch (the “Karpathy Loop”), the clearest practical demo of narrow RSI.

An AI agent gets one editable training file, one objective metric, and a fixed time budget per experiment.

It autonomously edits PyTorch code, runs short training jobs, evaluates the results, commits improvements, and loops.

Results: about 700 experiments in 2 days, yielding 20 stacked gains and an 11% training speedup on a small LLM (the gains transfer to larger models).

GitHub: github.com/karpathy/autoresearch.
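A minimal sketch of the keep-if-better loop described above, in Python. This is not the AutoResearch code: the editable training file is replaced by a hypothetical dictionary of two hyperparameters, the agent's edit by a random perturbation, and the short training run by a toy loss function. The names `toy_experiment`, `propose_edit`, and `research_loop` are illustrative assumptions; only the control flow (propose an edit, run a fixed-budget experiment, compare on one metric, commit improvements, repeat) mirrors the description, and the 700-experiment budget is borrowed from the reported run purely as an iteration count.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def toy_experiment(config: Config) -> float:
    """Hypothetical stand-in for a short, fixed-budget training run.

    Returns a loss (lower is better) with its optimum at lr=0.003,
    warmup=0.1. A real loop would train a model and read the metric.
    """
    return (config["lr"] - 0.003) ** 2 * 1e5 + (config["warmup"] - 0.1) ** 2


def propose_edit(config: Config, rng: random.Random) -> Config:
    """Hypothetical stand-in for the agent editing the training file:
    scale one randomly chosen setting by a factor in [0.8, 1.2]."""
    key = rng.choice(sorted(config))
    edited = dict(config)  # copy, so the committed baseline is untouched
    edited[key] *= 1.0 + rng.uniform(-0.2, 0.2)
    return edited


def research_loop(
    start: Config,
    experiment: Callable[[Config], float],
    n_experiments: int,
    seed: int = 0,
) -> Tuple[Config, float, List[float]]:
    """Greedy keep-if-better loop: each accepted edit is 'committed'
    and becomes the base for the next proposal, so gains stack."""
    rng = random.Random(seed)
    best = dict(start)
    best_loss = experiment(best)
    history = [best_loss]  # loss after each committed improvement
    for _ in range(n_experiments):
        candidate = propose_edit(best, rng)
        loss = experiment(candidate)
        if loss < best_loss:  # commit only strict improvements
            best, best_loss = candidate, loss
            history.append(best_loss)
    return best, best_loss, history


if __name__ == "__main__":
    start = {"lr": 0.01, "warmup": 0.3}
    best, best_loss, history = research_loop(start, toy_experiment, n_experiments=700)
    print(f"committed improvements: {len(history) - 1}")
    print(f"loss: {history[0]:.4f} -> {best_loss:.4f}")
    print(best)
```

Greedy acceptance is the simplest policy that matches "commit improvements": because every commit becomes the new baseline, small gains build on one another, which is the sense in which gains are "stacked."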

Karpathy calls this the seed for “swarm agents” doing frontier research overnight, and labs are already scaling it. Coverage has explicitly described it as sparks of recursive self-improvement and an early step toward the singularity.

Elon Musk / Terafab

Musk frames the $20–25B Tesla, SpaceX, and xAI fab in Austin as creating a hardware RSI loop.

Putting everything (design, lithography masks, fabrication, memory, packaging, and test) in one building shortens chip iteration from 6–9 months to days.

The result is a recursive process that allows rapid chip production plus frequent redesigns.

Two single-design lines (one for edge inference, one for space-hardened chips) target terawatt-scale output.

This ties directly to closing the full-stack loop: faster chips → faster algorithm research (Karpathy-style) → better chips.

Anthropic (Evan Hubinger, Jared Kaplan, March 2026)

Their view: recursive self-improvement, in the broadest sense, is not a future phenomenon but a present one. Claude now writes 70–90% of the code for next-generation models, and fully automated AI research could be as little as a year away (2027). They operate as if 2026 to 2030 is when all the most important things happen, with models becoming faster and better, possibly faster than humans can handle.

These are not abstract theory; they are running systems and explicit lab roadmaps.

Paths to Full RSI (The Full Stack, Step-by-Step)

Full RSI requires tight, compounding loops across every layer (no weak links)

Algorithms/Research — Karpathy Loop (autonomous experiment iteration) → swarm agents optimizing proxies → full automated research (Anthropic/OpenAI goal).

Software/Tools — Agents self-improve their own scaffolding, prompting, and orchestration.

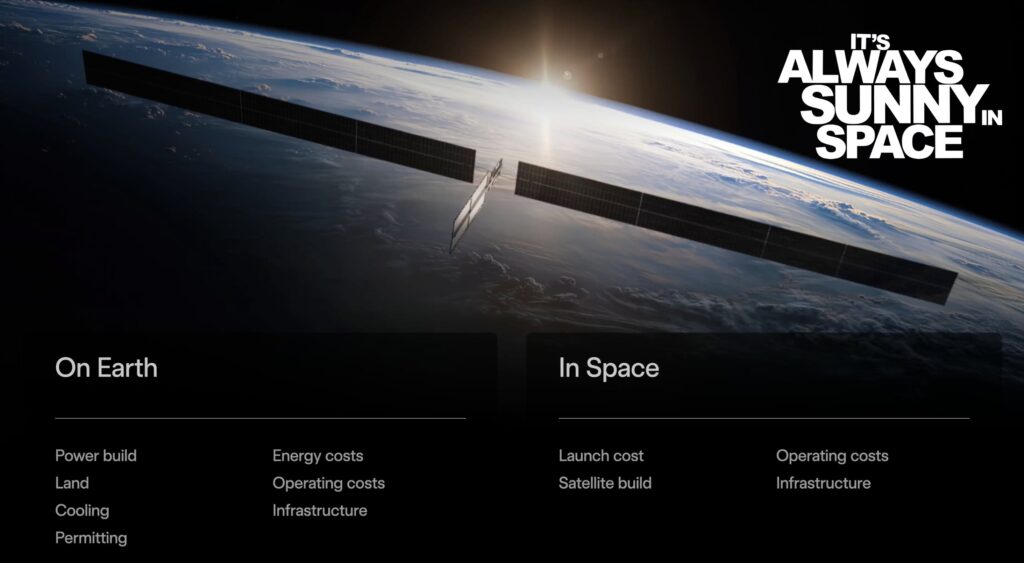

Hardware/Compute — Terafab-style vertical fabs + orbital data centers (bypassing 92 GW grid limits) for 10–100× faster iteration.

Data & Grounding — Synthetic data + embodied robots (Optimus) close the reality loop.

Inference/Deployment — Real-world feedback (robots, robotaxis, space clusters) feeds back into step 1.
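The "no weak links" requirement can be made concrete with a toy cycle-time model. It assumes, as a simplification, that one turn of the loop passes through each layer in sequence, so a turn lasts the sum of the stage times; accelerating a single layer then gives diminishing returns while the others stay slow, in the spirit of Amdahl's law. The stage names follow the list above, but every duration is a hypothetical placeholder (the hardware figure is loosely set near the 6-month end of the chip cycle mentioned earlier), and the names `STAGE_DAYS`, `cycle_days`, and `overall_speedup` are illustrative.

```python
from typing import Dict

# Hypothetical days spent in each layer per turn of the loop (placeholders).
STAGE_DAYS: Dict[str, float] = {
    "algorithms_research": 30.0,
    "software_tools": 10.0,
    "hardware_compute": 180.0,
    "data_grounding": 20.0,
    "inference_deployment": 40.0,
}


def cycle_days(stages: Dict[str, float]) -> float:
    """One turn of a strictly sequential loop takes the sum of its stage times."""
    return sum(stages.values())


def speed_up(stages: Dict[str, float], stage: str, factor: float) -> Dict[str, float]:
    """Return a copy of the stages with one stage made `factor` times faster."""
    faster = dict(stages)
    faster[stage] = stages[stage] / factor
    return faster


def overall_speedup(stages: Dict[str, float], stage: str, factor: float) -> float:
    """Whole-loop speedup from accelerating a single stage (Amdahl-style)."""
    return cycle_days(stages) / cycle_days(speed_up(stages, stage, factor))


if __name__ == "__main__":
    print(f"baseline turn: {cycle_days(STAGE_DAYS):.0f} days")
    for name in STAGE_DAYS:
        gain = overall_speedup(STAGE_DAYS, name, 100)
        print(f"{name:>22}: 100x faster -> {gain:.2f}x overall")
```

With these placeholder numbers, making the research layer 100× faster shortens the whole turn by only about 1.12×, while the same speedup on the slowest layer gives about 2.75×, which illustrates why agent loops alone do not close the full stack. Real layers overlap and run in parallel, so this sequential model overstates the penalty of any one slow stage.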

The flywheel: better chips (Terafab) → more and faster experiments (Karpathy) → better algorithms → even better chips. Vertical integration (Musk) plus agent swarms (labs) collapse the delays that previously kept RSI narrow.

Timeline: What Can Realistically Happen in 2026, 2027, and Beyond

2026 (Narrow RSI becomes standard infrastructure):

Karpathy-style loops scale in every frontier lab (hundreds of thousands of “automated interns”). The share of AI-generated research code rises from around 10% toward 90%. Terafab’s advanced-technology fab comes online, enabling the first hardware RSI cycles. The 92 GW power crunch forces co-located and orbital experiments. Progress accelerates dramatically but remains hybrid (humans set goals and safety limits). Schmidt’s “we don’t have it yet” window holds; Musk says “already in recursive improvement, full by end-2026 possible.”

2027 (Crossing into full/automated RSI likely):

Anthropic’s “fully automated AI research” target arrives. Agent swarms handle end-to-end model design and training. Terafab volume production and orbital clusters close the hardware loop. Schmidt’s 2–4 year window places initial full RSI (AI improving itself without humans in the inner loop) here. Intelligence-explosion risk emerges if the loops tighten unchecked; per Schmidt and Anthropic, labs will treat this as the governance moment.

2028–2030 and Beyond (Potential intelligence explosion + societal shift):

If the loops close fully, improvement compounds exponentially, with each generation improving faster than the last. Schmidt puts ASI “smarter than all humanity” at around 2031–2032 and urges values alignment or a shutdown capability. Benefits: radical abundance in science and medicine. Risks: loss of control, job displacement (as Dario Amodei of Anthropic has warned), and a geopolitical race (the China angle Schmidt emphasizes). Energy (92 GW and beyond) and safety become the real bottlenecks; Musk’s space pivot is a direct hedge on energy.
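One simple way to formalize "each generation improves faster than the last" is a toy model in which every generation takes a fixed fraction r of the previous generation's time. If r < 1, the total time for infinitely many generations is a geometric series, t0 / (1 - r), and is finite; that finiteness is the toy version of an "explosion." The first-generation time `t0`, the ratio `r`, and the function names are hypothetical choices for illustration, not forecasts from any of the people quoted here.

```python
from typing import List


def generation_times(t0: float, r: float, n: int) -> List[float]:
    """Duration of each of the first n generations when each one takes
    a fraction r of the previous generation's time (hypothetical model)."""
    return [t0 * r**k for k in range(n)]


def time_to_generation(t0: float, r: float, n: int) -> float:
    """Cumulative time to finish n generations: a partial geometric sum."""
    return sum(generation_times(t0, r, n))


def limit_time(t0: float, r: float) -> float:
    """Total time for infinitely many generations; finite only if 0 <= r < 1."""
    if not 0 <= r < 1:
        raise ValueError("series diverges unless 0 <= r < 1")
    return t0 / (1 - r)


if __name__ == "__main__":
    # Hypothetical: first generation takes 12 months, each next one 70% as long.
    t0, r = 12.0, 0.7
    for n in (1, 5, 10, 20):
        print(f"{n:>2} generations: {time_to_generation(t0, r, n):6.2f} months")
    print(f"limit: {limit_time(t0, r):.2f} months")
```

Under these placeholders, the cumulative time approaches 40 months (12 / 0.3) no matter how many generations follow. If instead r ≥ 1, generations never get faster and the sum grows without bound, which corresponds to the case where the loops do not close.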

Bottom line: Schmidt sees RSI as the decisive 2027–2029 inflection, already starting narrowly and requiring urgent governance. Karpathy and Musk have delivered the two missing practical pieces (research autonomy and hardware velocity) in the last month alone. The path is no longer theoretical; the loops are closing in real time. 2026 is the year narrow RSI goes from demo to default; 2027 is when “full” becomes plausible. Society and regulators are not ready, exactly as Schmidt warns.

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends, including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting-edge technologies, he is currently a Co-Founder of a startup and a fundraiser for high-potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker, and a guest on numerous radio shows and podcasts. He is open to public speaking and advising engagements.