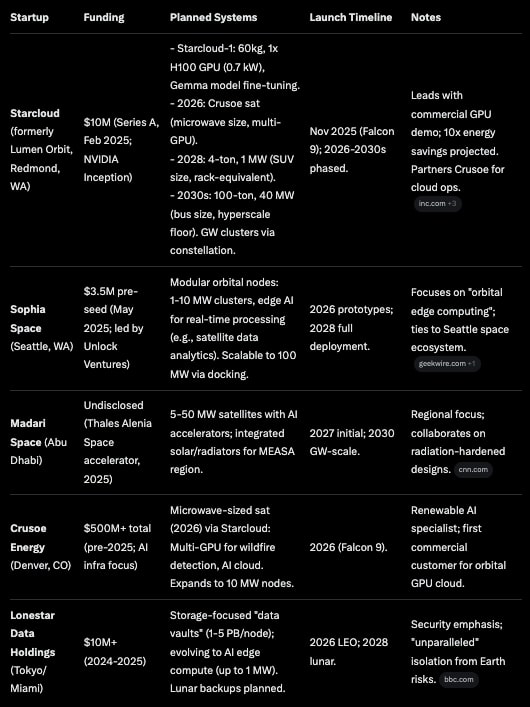

AI data centers on Earth face bottlenecks such as intermittent solar power, massive water use for cooling (up to 1.7 million tons per 40 MW facility over a decade), and grid constraints. Space offers near-constant solar exposure (up to 95% capacity factor in sun-synchronous orbits), zero water needs, and rapid deployment (2-3 months vs. years on Earth). However, the challenges include high upfront launch costs, radiation exposure to hardware, and the difficulty of dissipating heat efficiently in vacuum. Jeff Bezos predicts gigawatt (GW)-scale orbital facilities within 10-20 years, potentially 20x cheaper than Earth-based equivalents.

Starcloud-1 is a pivotal demo. It is a 60 kg satellite carrying an NVIDIA H100 GPU (2,000 teraflops for AI workloads, 1,000x more powerful than prior ISS systems such as HPE’s Spaceborne Computer-2 at 2 teraflops).

At 60 kilograms per H100 chip, a Falcon 9 could launch about 300 GPU chips.

At the same 60 kilograms per chip, a SpaceX Starship with 200 tons of reusable payload could launch about 3,000 chips along with their solar panels and heat radiators.

Halving the per-chip mass relative to this first-of-its-kind demo would raise Starship deployment to about 6,000 chips, or roughly 6-12 MW per flight.

A more challenging goal would be to reach about 40,000 GPU chips and 80 MW per Starship flight by 2030.

With 40 MW per flight from 20,000 two-kilowatt B200/Rubin GPUs, the estimate is 50 Starship launches per gigawatt and 250 for 5 gigawatts.
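The per-launch arithmetic above can be checked with a short sketch. The payload mass, per-chip mass, and per-GPU power come from the text; the function names are illustrative, "200 tons" is assumed to mean 200,000 kg, and the calculation ignores packaging, structure, and deployment margins.

```python
import math

def gpus_per_launch(payload_kg: int, kg_per_gpu: int) -> int:
    """GPUs that fit by mass alone; kg_per_gpu is assumed to include solar and radiators."""
    return payload_kg // kg_per_gpu

def launches_needed(target_mw: float, mw_per_launch: float) -> int:
    """Whole launches needed to reach a target power level."""
    return math.ceil(target_mw / mw_per_launch)

STARSHIP_PAYLOAD_KG = 200_000  # assumption: 200 metric tons

print(gpus_per_launch(STARSHIP_PAYLOAD_KG, 60))  # 3333, i.e. "about 3,000"
print(gpus_per_launch(STARSHIP_PAYLOAD_KG, 30))  # 6666, i.e. "about 6,000"

mw_per_launch = 20_000 * 2 / 1000  # 20,000 GPUs at 2 kW each = 40.0 MW
print(launches_needed(1_000, mw_per_launch))  # 25
print(launches_needed(5_000, mw_per_launch))  # 125
print(launches_needed(1_000, 20))             # 50
```

On pure power division, 40 MW per flight works out to 25 launches per gigawatt. The 50-launch figure matches about 20 MW per flight, or extra flights for structure not counted here; which of these the estimate intends is not stated, so treat this as an assumption.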

Starcloud plans to build a 5-gigawatt orbital data center with super-large solar and cooling panels approximately 4 kilometers in width and length.

Orbital solar power is put at $0.002/kWh vs. $0.045/kWh for terrestrial power, with no atmospheric losses (40% more intensity). Philip Johnston is the cofounder and CEO of Starcloud, which is based in Redmond, Washington.

Neocloud Crusoe is going to space. Crusoe, with $1.4 billion in funding, plans to deploy on a Starcloud satellite scheduled to launch in late 2026 and to offer limited GPU capacity from space by early 2027. The funding, from investors including Mubadala Capital and Valor Equity Partners, valued Crusoe at $10bn. A number of other companies plan to deploy data centers in space, including Axiom Space, NTT, Ramon.Space, and Sophia Space.

This November, history changes.

An NVIDIA H100 GPU—100 times more powerful than any GPU ever flown in space—launches to orbit.

It will run Google’s Gemma—the open-source version of Gemini. In space. For the first time.

First AI training in orbit. First model fine-tuning in… pic.twitter.com/WtAv9oisUO

— Ask Perplexity (@AskPerplexity) October 27, 2025

For a 40 MW facility over 10 years, operating costs would fall from $167M on Earth (including $140M for energy) to $8.2M in orbit (mostly launch).
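As a rough check on the energy portion of that comparison, the sketch below multiplies facility power by hours of operation and a price per kWh. It assumes continuous full-load operation for all 10 years; the two prices are the $/kWh figures quoted earlier in the article, and the function name is illustrative.

```python
HOURS_PER_YEAR = 8760

def energy_cost_usd(power_mw: float, years: float, usd_per_kwh: float) -> float:
    """Energy bill for a facility running continuously at full load (an assumption)."""
    kwh = power_mw * 1000 * HOURS_PER_YEAR * years
    return kwh * usd_per_kwh

terrestrial = energy_cost_usd(40, 10, 0.045)
orbital = energy_cost_usd(40, 10, 0.002)

print(f"terrestrial: ${terrestrial / 1e6:.1f}M")  # terrestrial: $157.7M
print(f"orbital:     ${orbital / 1e6:.1f}M")      # orbital:     $7.0M
```

Under these assumptions, the $140M energy figure corresponds to a terrestrial price of about $0.04/kWh, slightly below the $0.045/kWh quoted elsewhere.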

One problem is that radiation-based cooling is inefficient without convection, requiring massive radiators that add mass and cost.

Applications for Space-Based Data Centers

An early use case for extraterrestrial data centers is the analysis of Earth observation data, which could inform applications for detecting crop types and predicting local weather.

Real-time data processing in space offers immense benefits for critical applications such as wildfire detection and distress-signal response. Running inference in space, right where the data’s collected, allows insights to be delivered nearly instantaneously, reducing response times from hours to minutes.

Earth observation methods include optical imaging with cameras, hyperspectral imaging using light wavelengths beyond human vision, and synthetic-aperture radar (SAR) imaging to build high-resolution, 3D maps of Earth.

SAR, in particular, generates lots of data — about 10 gigabytes per second, according to Johnston — so in-space inference would be especially beneficial when creating these maps.
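To give a sense of scale, the sketch below turns the quoted 10 gigabytes per second into a daily volume and estimates how long that volume would take to downlink. Continuous imaging and the 10 Gbit/s downlink rate are hypothetical assumptions chosen for illustration, not figures from Starcloud or Johnston.

```python
SECONDS_PER_DAY = 86_400

def daily_volume_tb(rate_gb_per_s: float) -> float:
    """Terabytes produced per day at a constant data rate (decimal units)."""
    return rate_gb_per_s * SECONDS_PER_DAY / 1000

def downlink_hours(volume_tb: float, link_gbit_per_s: float) -> float:
    """Hours to send a volume over a link running at full rate without interruption."""
    bits = volume_tb * 1e12 * 8
    return bits / (link_gbit_per_s * 1e9) / 3600

volume = daily_volume_tb(10)         # 864.0 TB per day of continuous SAR capture
print(volume)
print(downlink_hours(volume, 10))    # 192.0 hours on a hypothetical 10 Gbit/s link
```

Under these assumptions one day of raw capture would take about eight days to send down, which is the gap that running inference in orbit and downlinking only the results is meant to close.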

Heat Radiation in Space-Based Systems

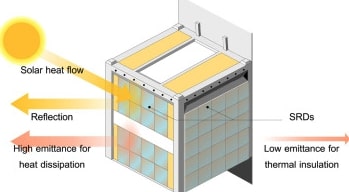

Space’s vacuum prohibits convection or conduction to air/water, so heat management relies on thermal radiation: infrared emission from large radiator surfaces into deep space (near-absolute zero). For AI GPUs like the H100 (700W TDP), waste heat (up to 80% of input power) must be captured via liquid cooling loops and piped to deployable radiators, often aligned with solar arrays for dual-use structures.

Coolant (e.g., a dielectric fluid) absorbs heat from the chips and circulates to finned panels coated in high-emissivity materials (ε > 0.9). These emit ~1 kW/m² at 300 K but scale poorly: a 5 GW center needs ~4 km² of radiators (about 1.5 sq mi), folded for launch.
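The radiator sizing can be sketched with the Stefan-Boltzmann law, which gives the power radiated per unit area as ε·σ·T⁴. The example below is a simplified estimate: it assumes a uniform panel temperature, ignores absorbed sunlight and Earth's infrared, and treats the number of radiating faces as a free parameter. The function names are illustrative.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)

def radiated_flux(temp_k: float, emissivity: float = 0.9, sides: int = 1) -> float:
    """Power radiated per m^2 of panel to a near-0 K background, in W/m^2."""
    return sides * emissivity * SIGMA * temp_k ** 4

def radiator_area_km2(heat_w: float, temp_k: float,
                      emissivity: float = 0.9, sides: int = 2) -> float:
    """Panel area needed to reject heat_w watts, in km^2."""
    return heat_w / radiated_flux(temp_k, emissivity, sides) / 1e6

print(round(radiated_flux(300)))            # 413 W/m^2 from one face
print(round(radiated_flux(300, sides=2)))   # 827 W/m^2 from both faces
print(round(radiator_area_km2(5e9, 300), 2))  # 6.05 km^2 for 5 GW of heat
```

With both faces radiating, the result is in the same range as the ~1 kW/m² and few-km² figures in the text. Because flux scales with T⁴, running the panels hotter shrinks the required area quickly.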

Adaptive designs, like smart radiator devices (SRDs), dynamically tune emissivity for efficiency.

Radiators in space are inefficient compared with convective cooling on Earth, which removes heat about 10x faster, so space radiators must be 10-20x larger.

High power densities (>40 kW/rack) risk hotspots, and cosmic rays can degrade materials. Solutions include redundant loops and AI-optimized thermal modeling. Starcloud’s demo tests whether commercial GPUs tolerate this with minimal shielding (the liquid cooling blocks double as protection).

Overall, the approach is feasible in LEO, but it scales to gigawatts only with advances in lightweight, deployable panels.

Results in Engineering – Adaptive thermal radiation design for spacecraft heat dissipation

Aerospace smart radiator devices (SRDs) offer temperature-responsive dynamic radiation cooling capabilities that are poised to revolutionize traditional spacecraft thermal management systems and enhance onboard intelligence. This paper systematically evaluates the heat transfer properties, performance, applicability, and energy-saving benefits of SRDs. Simulation results demonstrate that SRDs can reduce temperature fluctuations in geostationary satellites by up to 57 % and the maximum power-saving potential can approach 90 % of the optimized satellite’s total power consumption. In application scenarios, flat-sat equipped with SRDs can reduce solar panel area by approximately 23 %. These findings highlight the remarkable potential of SRDs to extend the feasible range of thermal control and improve the energy efficiency and sustainability of space exploration missions. Furthermore, the integration of deep learning enables an inverse determination of surface radiative properties based on thermal targets, evolving passive thermal control system design into a more efficient, flexible, and customizable paradigm.

SpaceX’s Competitive Edge

SpaceX holds a ~90% share of the LEO launch market, with Falcon 9 rideshares at $2K/kg vs. $20K+/kg for competitors. SpaceX Starship could drop the cost of reaching space to $50-100 per kilogram. Starcloud-1 is flying on a SpaceX rideshare at a launch cost of ~$300K.
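To make the per-kilogram prices concrete, the sketch below applies them to a 60 kg satellite such as Starcloud-1. The rates are the ones quoted in this section; the labels and function names are illustrative, and real pricing includes integration and minimum-mass charges that are not modeled here.

```python
def launch_cost_usd(mass_kg: float, usd_per_kg: float) -> float:
    """Launch cost from mass and a flat per-kilogram rate."""
    return mass_kg * usd_per_kg

def implied_rate_usd_per_kg(total_usd: float, mass_kg: float) -> float:
    """Per-kilogram rate implied by a total launch price."""
    return total_usd / mass_kg

SATELLITE_KG = 60  # Starcloud-1 mass, from the article

rates = {
    "Falcon 9 rideshare": 2_000,
    "Competitors": 20_000,
    "Starship (low)": 50,
    "Starship (high)": 100,
}
for label, rate in rates.items():
    print(f"{label}: ${launch_cost_usd(SATELLITE_KG, rate):,.0f}")
# Falcon 9 rideshare: $120,000
# Competitors: $1,200,000
# Starship (low): $3,000
# Starship (high): $6,000

print(implied_rate_usd_per_kg(300_000, SATELLITE_KG))  # 5000.0
```

The ~$300K price works out to about $5,000/kg for a 60 kg satellite, which suggests the quoted price covers more than the bare $2K/kg rate. That is an inference, not something the source states.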

Starlink’s 8,800+ sats provide low-latency backhaul (laser interlinks for orbital data routing), bypassing ground station bottlenecks.

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.
